Set up an anti-spam filter for your website with mod_security and fail2ban

Spammers frequently target products such as WordPress, web forum software, phpMyAdmin, and other common tools used by hobbyist and professional website administrators.

Whether you host your own blog or run a website for your company, it can be difficult to deal with the growing volume of malicious web traffic seen every day while still allowing friendly crawlers such as the Google, Yahoo, and MSN search engines.

This harmful and wasteful traffic may damage your system or simply waste its resources, slowing down the site for your more welcome users. If this sounds familiar to you, but your page hits don’t seem to add up, then you may want to consider taking some of the measures outlined below in order to secure your site from harmful hacks and sluggish spam.

Get set up

First things first, of course – installation is where we begin. mod_security and fail2ban are not new technologies, so for the first getting-started steps we will turn to some existing tutorials, and then tweak these tools to allow traffic from search engines and friendly crawlers. There is a fantastic tutorial on how to set up mod_security at thefanclub.co, which I highly recommend if you are on a Debian/Ubuntu system.

If you are on Fedora, RHEL, or CentOS (eww), the setup of mod_security and fail2ban is a little bit simpler; however, you will still want to install the OWASP mod_security Core Rule Set (CRS).

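Once everything is installed, restart Apache so that mod_security and the core rules are loaded (on Fedora, RHEL, and CentOS the service is called httpd rather than apache2):

/etc/init.d/apache2 restart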

Tweaking mod_security to allow Googlebot, Yahoo, and MSN

There are a few things that we do NOT want mod_security to do, the first of which is to block search engines' crawlers when they try to access our site. Their log entries look like the example below, which creates a difficult situation: the crawler's IP address is logged, purely for information, in a format that fail2ban will later pick up and try to block. We therefore need to find a way to prevent the logging, or tell fail2ban NOT to block it.

--7f70c037-A--
[08/Apr/2013:10:43:17 --0400] UWLXhH8AAAEAAHFfJ@QAAAAD 66.249.73.107 46478 67.23.9.184 80
--7f70c037-B--
GET /not-there HTTP/1.1
Host: ocpsoft.org
Connection: Keep-alive
Accept: */*
From: googlebot(at)googlebot.com
User-Agent: Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)
Accept-Encoding: gzip,deflate
--7f70c037-F--
HTTP/1.1 404 Not Found
X-Powered-By: PHP/5.3.10-1ubuntu3.4
X-Pingback: http://ocpsoft.org/xmlrpc.php
Expires: Wed, 11 Jan 1984 05:00:00 GMT
Cache-Control: no-cache, must-revalidate, max-age=0
Pragma: no-cache
Vary: Accept-Encoding
Content-Encoding: gzip
Content-Length: 5986
Keep-Alive: timeout=15, max=100
Connection: Keep-Alive
Content-Type: text/html; charset=UTF-8
--7f70c037-H--
Message: Warning. Pattern match "(?:(?:gsa-crawler \\(enterprise; s4-e9lj2b82fjjaa; me\\@mycompany\\.com|adsbot-google \\(\\+http:\\/\\/www\\.google\\.com\\/adsbot\\.html)\\)|\\b(?:google(?:-sitemaps|bot)|mediapartners-google)\\b)" at REQUEST_HEADERS:User-Agent. [file "/etc/modsecurity/activated_rules/modsecurity_crs_55_marketing.conf"] [line "22"] [id "910006"] [rev "2.2.5"] [msg "Google robot activity"] [severity "INFO"]
Apache-Handler: application/x-httpd-php
Stopwatch: 1365432196888162 1004514 (- - -)
Stopwatch2: 1365432196888162 1004514; combined=6736, p1=442, p2=6031, p3=0, p4=0, p5=261, sr=163, sw=2, l=0, gc=0
Producer: ModSecurity for Apache/2.6.3 (http://www.modsecurity.org/); OWASP_CRS/2.2.5.
Server: Apache/2.2.22 (Ubuntu)
--7f70c037-Z--
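The address fail2ban ends up acting on is the client IP in the section-A header line of each audit-log entry. As a minimal sketch, assuming the header has the layout shown in the excerpt above ([timestamp] unique-ID client-IP client-port server-IP server-port), a small shell function can print those addresses; the name modsec_client_ips is hypothetical and not part of mod_security or fail2ban.

```shell
#!/bin/sh
# Hypothetical helper: print the client IP of every audit-log entry read on stdin.
# Assumes section-A header lines look like:
#   [08/Apr/2013:10:43:17 --0400] UNIQUE_ID CLIENT_IP CLIENT_PORT SERVER_IP SERVER_PORT
# The bracketed timestamp splits into fields 1 and 2 and the unique ID is
# field 3, so the client address is field 4.
modsec_client_ips() {
    awk '$1 ~ /^\[/ && NF >= 7 { print $4 }'
}
```

For example, modsec_client_ips < /var/log/apache2/modsec_audit.log | sort | uniq -c | sort -rn | head lists the addresses that appear most often, which makes it easy to spot crawler IPs before fail2ban does.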

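Open /etc/modsecurity/activated_rules/modsecurity_crs_55_marketing.conf (the file named in the log entry above) and replace the block action with pass in each of the bot rules shown below. This tells mod_security not to block a request immediately just because something that looks like a search bot is involved, but rather to keep adding up the request's attack score and act only if it reaches the threshold.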
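If you prefer to script that edit rather than make it by hand, a sed filter can do it. This is a minimal sketch assuming the three bot rules (IDs 910006, 910007, and 910008) list block as a comma-separated action inside their action string; the helper name pass_bot_rules is hypothetical. It only touches lines carrying one of those IDs, so rules that should keep blocking are left alone.

```shell
#!/bin/sh
# Hypothetical helper: read a CRS rule file on stdin and, on lines carrying
# one of the bot-detection rule IDs 910006-910008, swap the "block" action
# for "pass". Every other line is printed unchanged.
pass_bot_rules() {
    # The address /id:.91000[678]./ selects the action lines of the bot rules
    # (each "." stands for a surrounding single quote). The substitution keeps
    # whichever delimiter (comma or double quote) sits around the action.
    sed -E '/id:.91000[678]./ s/([",])block([",])/\1pass\2/'
}
```

You might run pass_bot_rules < modsecurity_crs_55_marketing.conf > marketing.conf.new, review the result with diff, and only then put it in place and restart Apache. Either way, the finished rules should look like this: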

# ---------------------------------------------------------------
# Core ModSecurity Rule Set ver.2.2.5
# Copyright (C) 2006-2012 Trustwave All rights reserved.
#
# The OWASP ModSecurity Core Rule Set is distributed under
# Apache Software License (ASL) version 2
# Please see the enclosed LICENCE file for full details.
# ---------------------------------------------------------------
# These rules do not have a security importance, but shows other benefits of
# monitoring and logging HTTP transactions.
# --

SecRule REQUEST_HEADERS:User-Agent "msn(?:bot|ptc)" \
"phase:2,rev:'2.2.5',t:none,t:lowercase,pass,msg:'MSN robot activity',id:'910008',severity:'6'"

SecRule REQUEST_HEADERS:User-Agent "\byahoo(?:-(?:mmcrawler|blogs)|! slurp)\b" \
"phase:2,rev:'2.2.5',t:none,t:lowercase,pass,msg:'Yahoo robot activity',id:'910007',severity:'6'"

SecRule REQUEST_HEADERS:User-Agent "(?:(?:gsa-crawler \(enterprise; s4-e9lj2b82fjjaa; me\@mycompany\.com|adsbot-google \(\+http:\/\/www\.google\.com\/adsbot\.html)\)|\b(?:google(?:-sitemaps|bot)|mediapartners-google)\b)" \
"phase:2,rev:'2.2.5',t:none,t:lowercase,pass,msg:'Google robot activity',id:'910006',severity:'6'"

Look for other problems

You will also want to review the audit log and make sure that things look normal. If you are seeing a lot of outbound errors, it's possible that you've been hacked. More likely, however, your web application has a bug and is simply not playing nicely with HTTP, for example because it is not completely standards compliant. In the entry below, fail2ban reports a match on an audit-log message from rule 981222, which flags a response served as application/xml without a utf-8 charset (the "no valid date/time found" part appears because message lines carry no timestamp of their own):

Found a match for 'Message: Warning. Match of "rx (<meta.*?(content|value)=\"text/html;\\s?charset=utf-8|<\\?xml.*?encoding=\"utf-8\")" against "RESPONSE_BODY" required. [file "/etc/modsecurity/activated_rules/modsecurity_crs_55_application_defects.conf"] [line "36"] [id "981222"] [msg "[Watcher Check] The charset specified was not utf-8 in the HTTP Content-Type header nor the HTML content's meta tag."] [data "Content-Type Response Header: application/xml"] [tag "WASCTC/WASC-15"] [tag "MISCONFIGURATION"] [tag "http://websecuritytool.codeplex.com/wikipage?title=Checks#charset-not-utf8"] ' but no valid date/time found for 'Message: Warning. Match of "rx (<meta.*?(content|value)=\"text/html;\\s?charset=utf-8|<\\?xml.*?encoding=\"utf-8\")" against "RESPONSE_BODY" required. [file "/etc/modsecurity/activated_rules/modsecurity_crs_55_application_defects.conf"] [line "36"] [id "981222"] [msg "[Watcher Check] The charset specified was not utf-8 in the HTTP Content-Type header nor the HTML content's meta tag."] [data "Content-Type Response Header: application/xml"] [tag "WASCTC/WASC-15"] [tag "MISCONFIGURATION"] [tag "http://websecuritytool.codeplex.com/wikipage?title=Checks#charset-not-utf8"]
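When reviewing the audit log, it can help to see which rules fire most often: one rule, such as 981222, firing thousands of times may point to an application defect rather than an attack. Here is a minimal sketch that tallies the [id "..."] tags; the function name is hypothetical, and it assumes each message carries its rule ID in that form, as in the entries above.

```shell
#!/bin/sh
# Hypothetical helper: read a ModSecurity audit log on stdin and print
# "COUNT RULE_ID" pairs, most frequently triggered rule first.
modsec_rule_counts() {
    grep -o '\[id "[0-9][0-9]*"]' |  # keep only the [id "NNN"] tags
        tr -cd '0-9\n' |             # drop everything but digits and newlines
        sort | uniq -c |             # count occurrences of each rule ID
        sort -rn |                   # most frequent first
        awk '{ print $1, $2 }'       # trim the padding uniq -c adds
}
```

For example, modsec_rule_counts < /var/log/apache2/modsec_audit.log | head shows the ten noisiest rules, which is a reasonable place to start looking for misbehaving pages.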

Recovering from fail2ban – Unblocking an IP address

From time to time, you may sometimes need to un-ban an IP address, in which case, you’ll want this handy command, which tells IPtables to dump out a list of all current rules and blocked IPs to the console:fail2ban-regex /var/log/apache2/modsec_audit.log "FAIL_REGEX" "IGNORE_REGEX" fail2ban-regex /var/log/apache2/modsec_audit.log "\[.*?\]\s[\w-]*\s<HOST>\s" "\[.*?\]\s[\w-]*\s<HOST>\s"

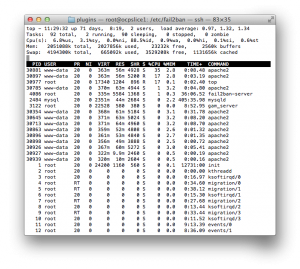

user@server ~ $ sudo iptables -L -n [sudo] password for lb3: Chain INPUT (policy ACCEPT) target prot opt source destination fail2ban-ModSec tcp -- 0.0.0.0/0 0.0.0.0/0 multiport dports 80,443 fail2ban-ssh tcp -- 0.0.0.0/0 0.0.0.0/0 multiport dports 22 Chain FORWARD (policy ACCEPT) target prot opt source destination Chain OUTPUT (policy ACCEPT) target prot opt source destination Chain fail2ban-ModSec (1 references) target prot opt source destination DROP all -- 77.87.228.68 0.0.0.0/0 DROP all -- 213.80.214.171 0.0.0.0/0 DROP all -- 70.37.73.28 0.0.0.0/0 DROP all -- 2.91.50.184 0.0.0.0/0 Chain fail2ban-ssh (1 references) target prot opt source destination RETURN all -- 0.0.0.0/0 0.0.0.0/0 user@server ~ $

fail2ban-ModSec. This is going to be important later, so write this down.

You may then want to extract the IP address from this list so you can pass it to a command line by line:

iptables -L -n

xargs command and perform the unbanning.

iptables -L -n | grep DROP | sed 's/.*[^-]--\s\+\([0-9\.]\+\)\s\+.*$/\1/g'

Reset all IPtables rules

You can always reset IPtables rules and let fail2ban start re-populating by running the following set of commands, or turning them into a script. (Source cybercity) A) Create /root/fw.stop /etc/init.d/fw.stop script using text editor such as vi:

#!/bin/sh echo "Restarting firewall and clearing rules" iptables -F iptables -X iptables -t nat -F iptables -t nat -X iptables -t mangle -F iptables -t mangle -X iptables -P INPUT ACCEPT iptables -P FORWARD ACCEPT iptables -P OUTPUT ACCEPT

Make it executable:

iptables -L -n | grep DROP | sed 's/.*[^-]--\s\+\([0-9\.]\+\)\s\+.*$/\1/g' | xargs -i{} iptables -D fail2ban-ModSec -s {} -j DROP Now you can run:

chmod +x /root/iptables-reset
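A full reset is a blunt instrument. If you only want to lift the bans fail2ban placed for mod_security, a chain-aware variant of the grep/sed extraction avoids picking up DROP rules from other chains. This is a minimal sketch assuming the iptables -L -n layout shown earlier (target, prot, opt, source, destination, with "--" in the opt column); the function name banned_ips is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read `iptables -L -n` output on stdin and print the
# source address of each DROP rule inside the chain named by $1 only.
# Assumes "--" fills the opt column, so the source address is field 4.
banned_ips() {
    awk -v chain="$1" '
        /^Chain / { in_chain = ($2 == chain); next }  # track the current chain
        in_chain && $1 == "DROP" { print $4 }         # DROP rows of that chain
    '
}
```

Usage might look like iptables -L -n | banned_ips fail2ban-ModSec | xargs -I {} iptables -D fail2ban-ModSec -s {} -j DROP, or, to release a single address, simply iptables -D fail2ban-ModSec -s 77.87.228.68 -j DROP.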

Conclusion

Please feel free to post comments, improvements, or extensions to this article. I hope that you now have a grasp of some of the fundamental principles of securing a website while still allowing friendly traffic, but there is obviously a world of work to be done here to achieve a tolerant yet secure rule set.

Again, I recommend making sure that your whitelists are set up BEFORE you turn any of these features on. But once you do flip the switch, you should be happy with a faster, cleaner server.
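For the crawler part of such a whitelist, the User-Agent header alone cannot be trusted, since any client can claim to be Googlebot. Google and Bing both describe verifying their crawlers with a reverse DNS lookup on the IP followed by a forward lookup on the resulting name. The sketch below covers only the domain check in that process, under the assumption that googlebot.com, google.com, and search.msn.com are the domains you want to accept; the helper name is hypothetical, and the domain list should be checked against each search engine's current documentation.

```shell
#!/bin/sh
# Hypothetical helper: succeed if a reverse-DNS hostname belongs to one of the
# crawler domains assumed below, fail otherwise. This is only the domain check;
# the forward lookup that confirms the name maps back to the IP is separate.
is_friendly_crawler_host() {
    # Normalise case and strip the trailing dot that `host` prints.
    name=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
    name=${name%.}
    case "$name" in
        *.googlebot.com|*.google.com|*.search.msn.com) return 0 ;;  # assumed list
        *) return 1 ;;
    esac
}
```

You would feed it the name printed by host 66.249.73.107, then run host on that name and confirm it resolves back to the same address before adding the IP to fail2ban's ignoreip setting in jail.local.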

This only scratches the surface of web application security, which spans many layers, from the server all the way up to the application; the more layers you can add that still work nicely together for your users, the better. If you are interested in Java application-level security, I have a few more posts on that topic.

Posted in OpenSource, Security, Web Services

Lincoln Baxter III
